For 2023 (and 2024 ...) - we are now fully retired from IT training. We have made many, many friends over 25 years of teaching about Python, Tcl, Perl, PHP, Lua, Java, C and C++ - and MySQL, Linux and Solaris/SunOS too. Our training notes are now very much out of date, but due to upward compatibility most of our examples remain operational and even relevant, and you are welcome to make use of them "as seen" and at your own risk. Lisa and I (Graham) now live in what was our training centre in Melksham - happy to meet with former delegates here - but do check ahead before coming round. We are far from inactive - rather, enjoying the times that we are retired but still healthy enough in mind and body to be active! I am also active in many other areas and still look after a lot of web sites - you can find an index ((here))


Monitoring the success and traffic of your web site

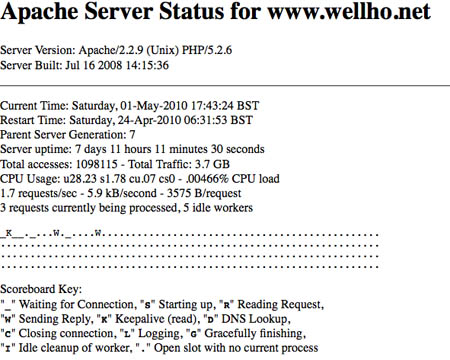

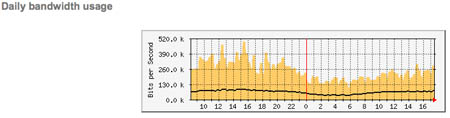

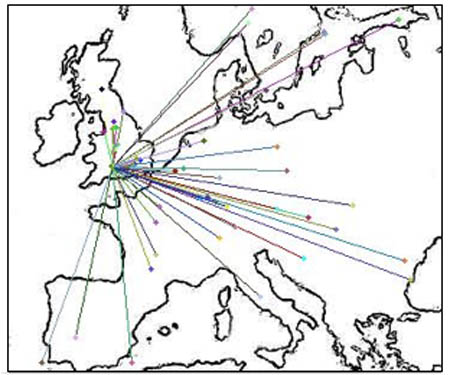

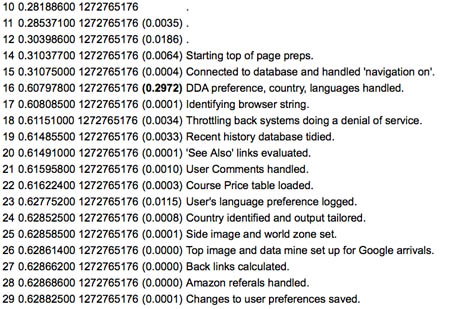

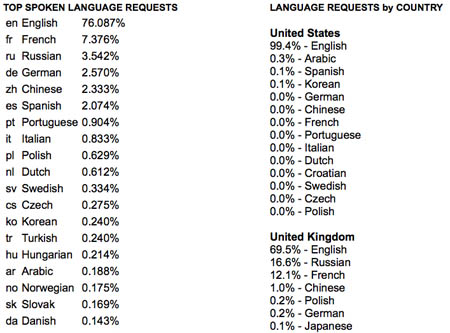

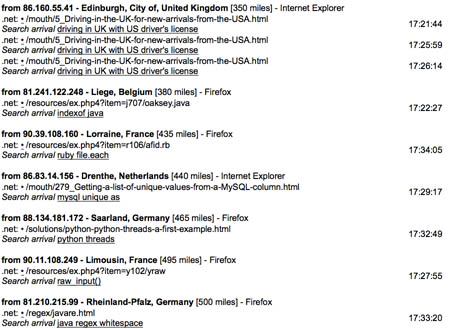

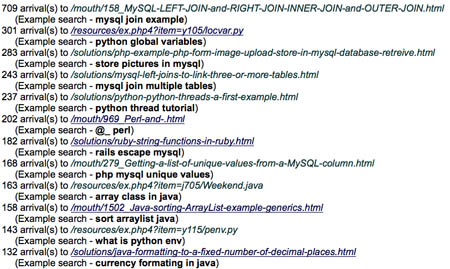

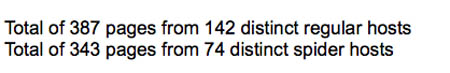

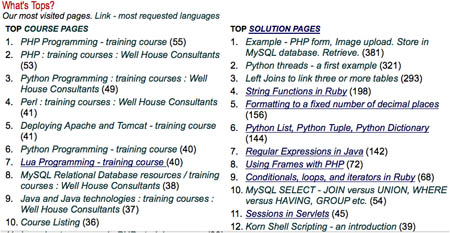

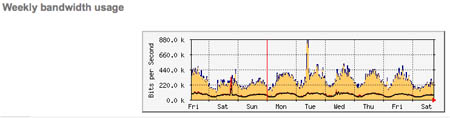

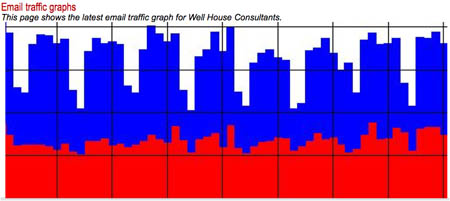

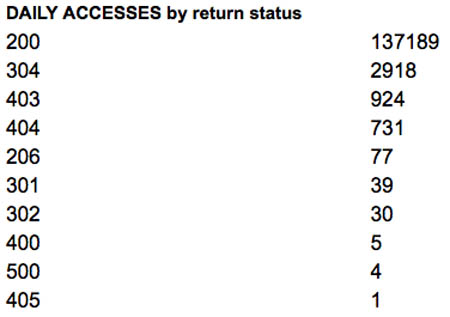

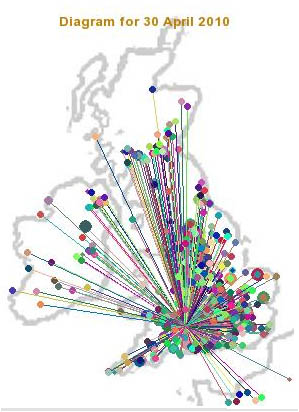

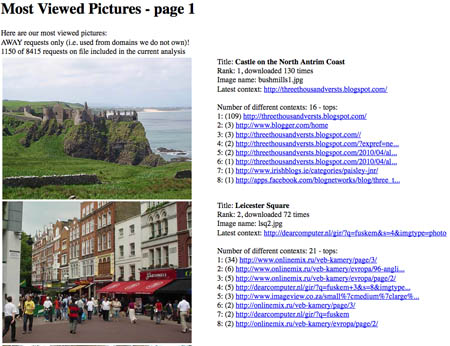

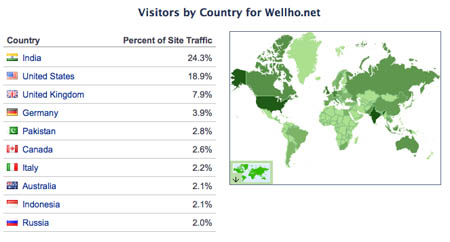

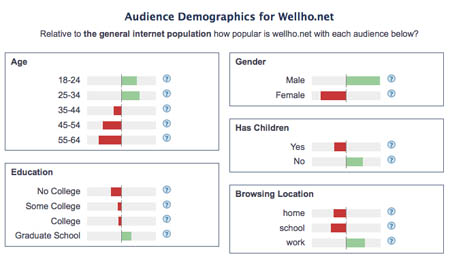

If you're looking after a shopping centre, a major store, or a specialist shop on Bank Street, you'll want to know roughly how many people you've got "in", roughly what they're doing, and whether there's going to be a rush in a few minutes. And so it should be with a website - be it a virtual shop where you're selling products, a virtual museum where you've got exhibits for people to look at, or a virtual street corner / pub where people hang out with their pals.

Yet ask a shop owner these questions:

* how many people visit your shop each day?
* what proportion 'just look' rather than buying?
* how many people are in your shop now?
* how did they find out about you?
* are they regulars?
* where do they come from to see you?

You'll usually get an answer based on knowledge and a feel for the business, but ask a virtual shop owner and you'll very often find a big gap in his / her knowledge of what's going on.

During a recent course, I demonstrated some of the elements of how we keep an eye on our web site, and it's been suggested that others might like an overview.

Data Sources

1. At the "heart" of web site monitoring is the web server log file, where each request is recorded when completed; there are several standard record formats, and we use the "combined" format, which generates record lines like this:
   66.25.148.102 - - [20/Jan/2010:03:29:54 +0000] "GET /course/tlfull.html HTTP/1.1"

2. These days, many pages on many sites aren't just held there as files that are returned to the user straight from the disc, but rather they're programs which are run each time they're called. That allows the programmer who's writing web site code to collect other raw information, such as data that is "posted" into applications, cookies that link requests into a session, and language preferences where a user's browser is set to ask for responses in French, Korean, Russian ...

3. ISP traffic information. Your web space provider will have information about how busy your server connection is, and this information may be available to you; at the very least, you should have "traffic so far this month", and you may have something as immediate as a moving graph too.

4. If you carry advertising material on your site from a third party, you may have access to other data from that third party which gives you further feedback as to where else that same visitor goes - a sort of "pub watch" which will let you establish more about your visitors based on shared intelligence.

5. Search engines such as Google which send people to your site will have stats as to how many people they send, and also about how many other people link in to your site.

6. Our server is multi-threaded, and we have server-info turned on so that (from certain locations only) we can keep an eye on the number of concurrent requests. And within any programs on our web site we can / could choose to run operating system status commands to tell us how much memory is in use, whether we have queues for our CPU, etc.

Our use of those sources

We do more than most - but we could usefully do a lot more if we had the time. Rather like CCTV footage, there are huge server logs and information flows that no-one ever looks at!

We store log files (for easy management, we split them on a day by day basis) so that we can go back and troubleshoot if we need to.

A number of tools on our site analyse the most recently closed log file, so that I can look back and tell you what happened yesterday - that's good for ongoing marketing, watching trends, identifying popular pages, etc.

The majority of our html and image pages are served via scripts / programs, and common header files used on each page save some of the information that goes into the log file anyway into a MySQL database.

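As a rough illustration of the header-file idea (each page request saving a row into a database so that recent activity can be queried), here is a minimal sketch in Python. It is not our production code: it uses the standard sqlite3 module as a stand-in for MySQL, the table, column and function names are invented for the example, and the 15-minute window is the figure this article gives for our "currently on our site" reporting.

```python
import sqlite3
import time

WINDOW_SECONDS = 15 * 60  # 15-minute window, as quoted in the article


def open_store():
    """Create an in-memory table (hypothetical schema, sqlite3 standing in for MySQL)."""
    db = sqlite3.connect(":memory:")
    db.execute(
        "CREATE TABLE recent_hits (seen REAL, ip TEXT, page TEXT, language TEXT)"
    )
    return db


def record_request(db, ip, page, language, now=None):
    """Intended to be called from a shared page header: prune old rows, then log this hit."""
    now = time.time() if now is None else now
    # Remove anything older than the window before adding the new record
    db.execute("DELETE FROM recent_hits WHERE seen < ?", (now - WINDOW_SECONDS,))
    db.execute(
        "INSERT INTO recent_hits VALUES (?, ?, ?, ?)", (now, ip, page, language)
    )
    db.commit()


def visitors_on_site(db):
    """Distinct addresses still in the table - a rough proxy for visitors 'in' now."""
    (count,) = db.execute("SELECT COUNT(DISTINCT ip) FROM recent_hits").fetchone()
    return count


if __name__ == "__main__":
    db = open_store()
    # Invented requests; timestamps are passed in so the example is repeatable
    record_request(db, "192.0.2.10", "/course/tlfull.html", "en-GB", now=0)
    record_request(db, "192.0.2.11", "/index.html", "fr", now=600)
    record_request(db, "192.0.2.10", "/index.html", "en-GB", now=1000)
    print(visitors_on_site(db))
```

Pruning on every request keeps the table small without a separate clean-up job, at the cost of one extra DELETE per page view; on a busier site you might prefer to prune on a timer instead.
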
Old records can be deleted efficiently from the start of a database table - and indeed each new request deletes records that are over 15 minutes old, giving us the data that's behind our "currently on our site" reporting capability.

We take a one-line CPU status and discreetly place it onto a sprinkling of pages so that we can very quickly get an idea of how our server is coping / whether we have a flood of unusual activity (but - note - this will NOT tell us about the visitor who's quietly doing something very nasty in the corner!)

Let's see some diagrams and samples:

* This is a very short term display that shows the parallel operations that the web server is running - a snapshot showing connections which are waiting, transferring data, in "keepalive" mode, etc.
* From our WSP (web space provider) - transfer rates of data throughout the last 24 hour period from our server
* A diagram showing where (in Europe) visitors have arrived from in the last 15 minutes.
* Sometimes, a site can perform poorly as database tables grow beyond a certain size / other resources start maxing out. This is an internal page that we run which goes through a variety of header scripts, benchmarks them, and tells us where the time's being taken
* What languages do people request in their browsers, and in which countries (as a site in English, presenting courses in English, we do of course get biased results here when compared to the internet as a whole)
* The size of our daily log file, graphed over the last month
* Information about visitors who have been on our site in the last 15 minutes, tracking how they are moving from page to page - an interesting study!
* Top search engine landing pages, and a sampling of the search terms that took people to each of them. We have the capability of reporting on every search term here!
* Quick summary of activity for last 15 minutes
* Top accesses for the last 24 hours, across various sections of the site
* Another diagram from our WSP, showing the traffic from our dedicated server for the last week.

* Country by Country - where the accesses to our web site came from in the last 24 hour log file
* And let's not forget email traffic levels - potentially genuine emails in one colour, rejected as spam in another. As a sales and marketing operation, our filters let through emails if there is any doubt - that subject line of "hi" or a mis-spelled "enquery" could be a potential customer.
* The log file for the last 24 hours again, but this time summarised as the number of times each HTTP status was returned. 200 - good response, by the way!
* In the 24 hour file, which UK locations were represented?
* Which images were most used from our web site (this particular screen capture shows pictures that are hot-linked - i.e. where our image is called up via our bandwidth to someone else's site, without our permission!)

We also have email alerts (not illustrated) and database captures of some unusual activities, and a limiter which will cap denial of service attacks - again, not illustrated here.

Other available information

The illustrations in the previous sections are just samples ... and further displays and tools such as analog, awstats, googlestats, are available to us.
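To give a flavour of how a summary like the "number of times each HTTP status was returned" display can be produced from a raw log, here is a minimal Python sketch. It is an illustration rather than the tool we actually run: the regular expression is my own attempt at matching "combined" format records, the function names are invented, and the sample lines are made up (documentation-range IP addresses and a fictitious browser name).

```python
import re
from collections import Counter

# Assumed pattern for a "combined" format record:
# ip, two ignored fields, [time], "request", status, size, "referer", "agent"
LOG_PATTERN = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<when>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) (?P<size>\S+) '
    r'"(?P<referer>[^"]*)" "(?P<agent>[^"]*)"'
)

# Invented sample records, for illustration only
SAMPLE_LINES = [
    '192.0.2.10 - - [20/Jan/2010:03:29:54 +0000] "GET /course/tlfull.html HTTP/1.1" '
    '200 5120 "-" "ExampleBrowser/1.0"',
    '192.0.2.11 - - [20/Jan/2010:03:30:02 +0000] "GET /missing.html HTTP/1.1" '
    '404 312 "-" "ExampleBrowser/1.0"',
    '192.0.2.10 - - [20/Jan/2010:03:30:10 +0000] "GET /course/tlfull.html HTTP/1.1" '
    '200 5120 "http://www.example.com/" "ExampleBrowser/1.0"',
    'not a log line',
]


def parse_line(line):
    """Return the fields of one record as a dict, or None if the line does not match."""
    match = LOG_PATTERN.match(line)
    return match.groupdict() if match else None


def summarise(lines, top=3):
    """Count records per HTTP status, and list the most requested successful pages."""
    statuses = Counter()
    pages = Counter()
    for line in lines:
        record = parse_line(line)
        if record is None:
            continue  # skip anything that is not a well-formed record
        statuses[record["status"]] += 1
        if record["status"] == "200":
            pages[record["path"]] += 1
    return statuses, pages.most_common(top)


if __name__ == "__main__":
    statuses, top_pages = summarise(SAMPLE_LINES)
    for status, count in sorted(statuses.items()):
        print(status, count)
    for path, count in top_pages:
        print(path, count)
```

To run it against a real file, pass an open file object to `summarise` in place of `SAMPLE_LINES`; lines that don't fit the pattern are silently skipped, which you may or may not want when troubleshooting.
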
At times of extreme pressure, we can also go back to the raw log files and write a utility in Perl (still the fastest for me personally in an emergency), Python (better for something that's going to grow into something we'll keep) or PHP (if we want to integrate it into our system and make a nice display of it). Tools such as ab and JMeter provide for further, immediate, server testing.

You may be surprised how much public information is available too - on any popular web site - through places such as Alexa. Here are some screen captures of our current Alexa Ratings - I have compared wellho.net with a forum we run at the First Great Western Coffee Shop.

* Pages per visitor - this is a graph which compares two of our sites on this statistic
* Which countries of the world generate traffic to our web site - with a map that's coloured / weighted by the relative internet population of each country
* Some demographic information which may give an idea of how our visitor base differs from an average mix of internet users.
* Where users arrive from - the previous sites they have visited

Holes in the available information

As well as being aware of the information we have, we should be aware of the information we do NOT have, or do not notice. In shop terms, that's the people who walk up our street but don't come in to our door, or don't even come to our town because they don't know that we exist.
Those latter may be somewhat hinted at by Google Adwords suggestions; the former are rather trickier, but making sure that your site is W3C compliant, works on all browsers, etc, can rather blindly help you reduce the number of people who see you're there but walk on by.

Accesses which are small(er) in number, but looking for holes in the website or otherwise "nasty", may be overlooked by the information flows I have described as well; we are aware of many (indeed, I show delegates the sort of thing I mean during our courses), and we do keep a watching eye open. "First principles" security helps, but with a site as (over) complex as ours, occasional new issues will crop up and it's a question of identifying each rather than being able to have a generic "catch all".

We have no easy way of knowing when information from our site is cloned to another site (but we can and do pick up hot links). If we suspect that a particular page has been cloned, a search for a chunk of text that's in it will often reveal the copy/s - but there's no generic tool. There are sites (but they charge) that look for plagiarism for colleges, which could be useful.

On sites which welcome public contributions, "Forum naughty"s need to be monitored. Again, that's something that has to be a somewhat manual process - we have a whole lot of resources about moderating forums [here]

Pinches of Salt

You may have heard the saying "there are lies, damned lies and statistics" - and a lot of what we are looking at in this article is statistics. They are also automated - so at times odd things will crop up.

How many hits does a website have? Looking at the logs for yesterday - how busy is our web site? Do we shout out about and monitor 103000 accesses per day (that's the number of records in our log file on a quiet bank holiday Saturday), 45500 requests for "proper" pages such as .html and .php ones, or 7000 that were identified as being landing pages from search engines? Or should we look at unique IP addresses?

Should we eliminate "George Inn, Mere, Wiltshire" which landed one user [here] and Southwest Online Booking which brought someone [here] when they were probably looking to purchase airline tickets? Mind you - I was surprised to read in one place "Wellho.net provide charter flights to and from Bristol Airport".

Conclusion

There's no easy "one size fits all" way to monitor - for security and marketing purposes - the running of your web site. But there are many tools available to you to help you see and understand what's going on. The data available to you is voluminous, but incomplete ... and as well as the standard tools, you can usefully produce your own to help you gain understanding.

This article was inspired by a delegate from a course a couple of weeks back where I was showing off / talking about some of our tools. And since then I have done further detailed analysis using these tools and others to look at a different customer's site, to help her understand her customer base and traffic patterns. So people do want to know.

The article does NOT, though, form the basis of a public course at present - elements are on some of our courses, though, and we can set up a more specific consultancy day if you would like me to look at your site and make comment. Best contact? - email - graham@wellho.net.

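Returning to the "how many hits?" question from Pinches of Salt, the sketch below shows how the same set of log records can yield several quite different headline numbers. It is a minimal Python illustration under stated assumptions: records are taken to be already parsed into (ip, path, referer) tuples, the list of search engine host markers is a guess for the example rather than the test we really use, and the sample data is invented.

```python
from urllib.parse import urlsplit

PAGE_SUFFIXES = (".html", ".php")               # "proper" pages, as in the article
SEARCH_HOSTS = ("google.", "bing.", "yahoo.")   # assumed, illustrative markers only


def is_page(path):
    """True if the requested path (ignoring any query string) looks like a page."""
    return urlsplit(path).path.endswith(PAGE_SUFFIXES)


def from_search_engine(referer):
    """True if the referring host contains one of the assumed search engine markers."""
    host = urlsplit(referer).netloc.lower()
    return any(marker in host for marker in SEARCH_HOSTS)


def measure(records):
    """Return several competing answers to 'how busy were we?' for one set of records."""
    records = list(records)
    pages = [r for r in records if is_page(r[1])]
    return {
        "all requests": len(records),
        "page requests": len(pages),
        "search landings": sum(1 for r in pages if from_search_engine(r[2])),
        "unique addresses": len({r[0] for r in records}),
    }


if __name__ == "__main__":
    # Invented (ip, path, referer) records
    sample = [
        ("192.0.2.10", "/index.html", "http://www.google.co.uk/search?q=python+course"),
        ("192.0.2.10", "/images/logo.png", "http://www.example.org/index.html"),
        ("192.0.2.10", "/course/tlfull.html", "-"),
        ("192.0.2.11", "/resources/demo.php?id=3", "-"),
        ("192.0.2.11", "/style.css", "-"),
    ]
    for name, value in measure(sample).items():
        print(name, value)
```

None of the four figures is "the" answer; which one you quote depends on whether you are sizing a server, judging marketing, or counting people.
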
(written 2010-05-01, updated 2010-05-04)

This is a page archived from The Horse's Mouth at http://www.wellho.net/horse/ - the diary and writings of Graham Ellis. Every attempt was made to provide current information at the time the page was written, but things do move forward in our business - new software releases, price changes, new techniques. Please check back via our main site for current courses, prices, versions, etc - any mention of a price in "The Horse's Mouth" cannot be taken as an offer to supply at that price.


PH: 01144 1225 708225 • EMAIL: info@wellho.net • WEB: http://www.wellho.net • SKYPE: wellho

PAGE: http://www.wellho.net/mouth/2748_Mon ... -site.html • PAGE BUILT: Sun Oct 11 16:07:41 2020 • BUILD SYSTEM: JelliaJamb